A short reflection on my first month exploring Kaggle and learning through real data.

From Learning to Practice

It’s been seven weeks since my last post. During this time, I finished the Google Advanced Data Analytics Professional Certificate, which covers eight courses. It was a wonderful journey, where I was led step by step into the world of data science. The course content is thoughtfully designed, the instructors are superb, and the learning curve feels just right. I cannot express my gratitude to Google enough, and I feel very lucky to have found this series of courses.

After finishing the certificate, I wanted more exposure to real data analysis and more hands-on experience, so I joined Kaggle, a community for data scientists and machine learning engineers. (My Kaggle profile can be found here: Kaggle.) In my first month, I have entered two competitions, both from the Playground Series, which features month-long tabular data competitions.

With some beginner’s luck, my code was upvoted and used, and I earned one silver and one bronze code medal. Both projects involve making probability predictions for classification tasks and are evaluated using the ROC AUC score. The February theme was predicting heart disease, and the March task is to predict customer churn for a telecom company.

Working with a Blank Canvas

These competitions are my first experience of facing a completely blank canvas in data analysis with the brush in my own hand. My biggest challenge when writing a notebook is forming a clear overall picture and designing a coherent pipeline.

I typically apply three to four models, each requiring slightly different feature engineering. This means I need to think in advance about what should be handled globally and what should remain model-specific. At the same time, I must choose a proper validation strategy (such as a train-test split or Stratified K-Fold), tune hyperparameters with cross-validation, and avoid data leakage throughout the entire process.
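Here is a minimal sketch of what that combination can look like in scikit-learn. It is not the code from my notebooks: the data is synthetic, and logistic regression with a standard scaler is only a stand-in for whichever model and preprocessing a notebook actually uses. The point it illustrates is that preprocessing placed inside a pipeline is re-fit on the training part of every fold, which is one common way to avoid leakage.

```python
import numpy as np
from scipy.stats import loguniform
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import (
    RandomizedSearchCV,
    StratifiedKFold,
    cross_val_score,
)
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic, imbalanced stand-in for a competition dataset (an assumption).
X, y = make_classification(
    n_samples=500, n_features=10, weights=[0.8, 0.2], random_state=0
)

# The scaler lives inside the pipeline, so it is fit only on the training
# rows of each fold and never sees that fold's validation rows.
pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# Stratified folds keep the class ratio roughly equal across folds.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipeline, X, y, cv=cv, scoring="roc_auc")
print("Fold ROC AUC:", np.round(scores, 3))

# Hyperparameter tuning reuses the same folds and the same pipeline.
# make_pipeline names each step after its lowercased class name.
search = RandomizedSearchCV(
    pipeline,
    param_distributions={"logisticregression__C": loguniform(1e-3, 1e2)},
    n_iter=8,
    cv=cv,
    scoring="roc_auc",
    random_state=0,
)
search.fit(X, y)
print("Best C:", search.best_params_["logisticregression__C"])
print("Best mean ROC AUC:", round(search.best_score_, 3))
```

Model-specific feature engineering can follow the same pattern: each model gets its own pipeline, while the fold definition stays shared so the scores remain comparable.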

Simultaneously, I pay attention to local details—such as formatting plots clearly, explaining how tree-based models behave (for example, whether they are shallow, learn quickly, or split easily), and interpreting results through coefficients or feature importance.

Along the way, I learned new tools, including CatBoost, RandomizedSearchCV, and StratifiedKFold (I later found that Optuna works more efficiently, but I haven’t had the chance to apply it yet). I also strengthened my feature engineering and experimented with simple ensembling techniques such as averaging model outputs.

I still remember the first time I opened the Titanic project (a 101-level training project on Kaggle) six months ago. The code felt distant and difficult to understand. But now, everything on Kaggle has started to make sense. I am independently solving real data problems and have completed notebooks that have been upvoted by several Experts and Masters.

Experimenting and Learning

Kaggle is a collaborative community where many participants share their code on competition pages. It’s interesting to draw inspiration from others’ ideas and run your own experiments.

For example, in the churn project, I explored a two-step modeling approach by adding a correction term to an initial model’s prediction.

The idea is as follows: I first train a model such as XGBoost or CatBoost to obtain predicted probabilities. Then, I compute the residuals and train a Ridge model on those residuals. Finally, I adjust the original prediction using the following transformation:

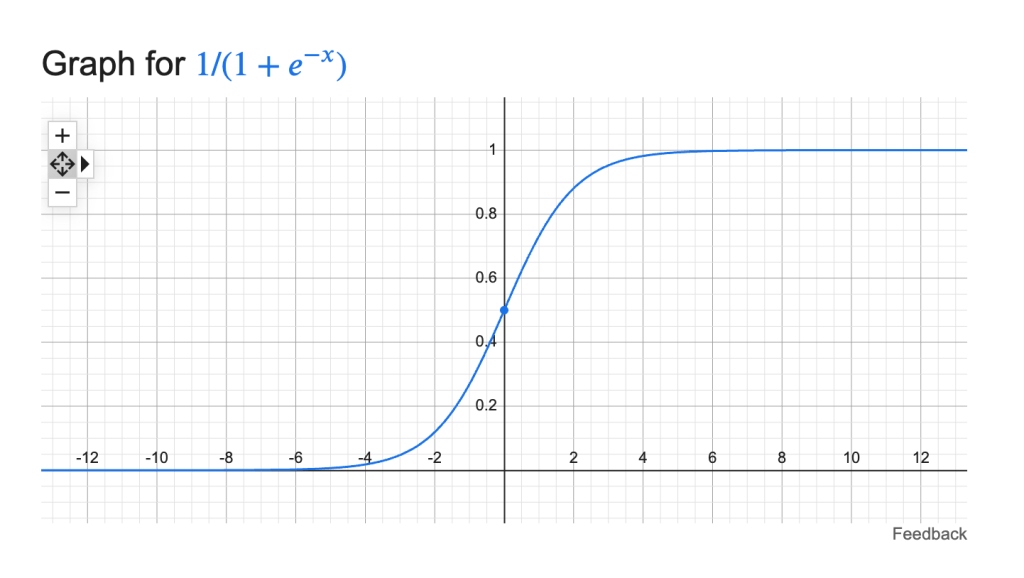

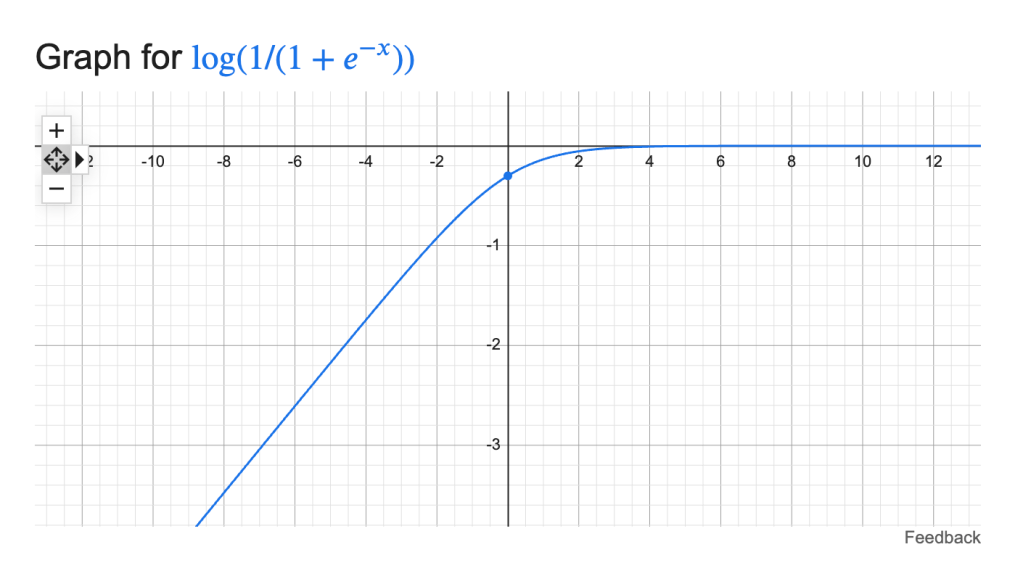

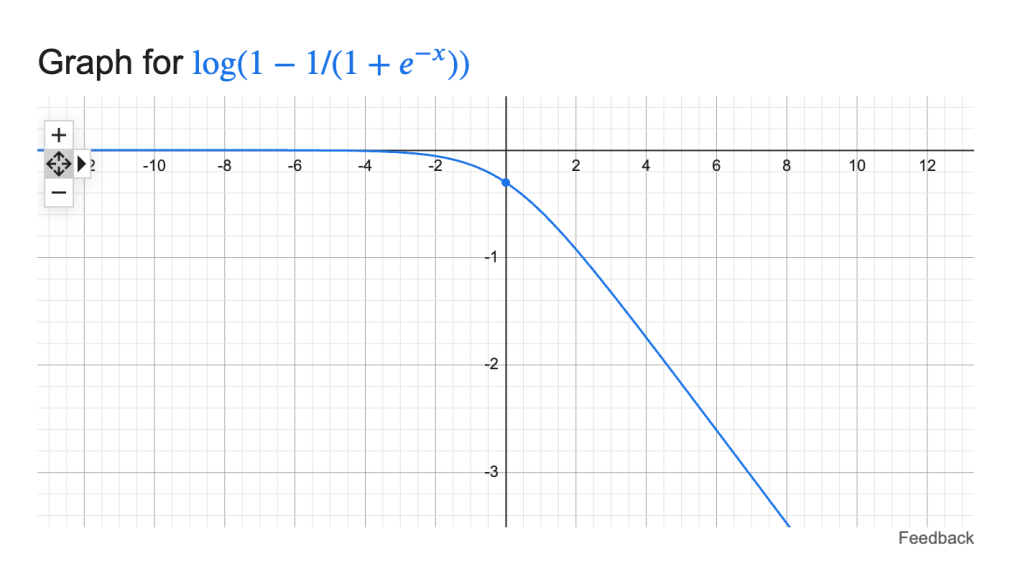

final = expit(logit(model1_prob) + α · ridge_resid_pred)

Here, logit and expit are standard transformations between probability space and log-odds space, and α is a tunable parameter controlling how much correction to apply. The key point is that ridge_resid_pred is not a probability, but a correction term that can be either positive or negative.
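The sketch below walks through the same three steps on synthetic data. Several parts are assumptions rather than my original notebook code: scikit-learn's GradientBoostingClassifier stands in for XGBoost or CatBoost, the residual is taken as the label minus the predicted probability, the residuals come from out-of-fold predictions, and the helper name `corrected_probability` is hypothetical.

```python
import numpy as np
from scipy.special import expit, logit
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.linear_model import Ridge
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import (
    StratifiedKFold,
    cross_val_predict,
    train_test_split,
)

# Synthetic stand-in for the competition data (an assumption).
X, y = make_classification(n_samples=600, n_features=10, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Step 1: base model. Out-of-fold probabilities are used so that each
# residual comes from a model that did not train on that row (assumption).
base = GradientBoostingClassifier(n_estimators=50, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
oof_prob = cross_val_predict(
    base, X_train, y_train, cv=cv, method="predict_proba"
)[:, 1]

# Step 2: residual = label - predicted probability, modeled with Ridge.
# Note: Ridge's own `alpha` is its regularization strength, not the
# correction weight from the formula.
residuals = y_train - oof_prob
ridge = Ridge(alpha=1.0).fit(X_train, residuals)

# Step 3: correct the base prediction in log-odds space.
base.fit(X_train, y_train)
eps = 1e-6  # keep probabilities away from 0 and 1 so logit stays finite
model1_prob = np.clip(base.predict_proba(X_test)[:, 1], eps, 1 - eps)
ridge_resid_pred = ridge.predict(X_test)


def corrected_probability(model1_prob, ridge_resid_pred, alpha):
    """final = expit(logit(model1_prob) + alpha * ridge_resid_pred)."""
    return expit(logit(model1_prob) + alpha * ridge_resid_pred)


for alpha in (0.0, 0.5, 1.0):
    final = corrected_probability(model1_prob, ridge_resid_pred, alpha)
    print(f"alpha={alpha}: ROC AUC = {roc_auc_score(y_test, final):.4f}")
```

Setting α to 0 returns the base model's prediction unchanged, which makes it a convenient baseline when comparing different values of α.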

As it turned out, this second model on the residual did not provide a meaningful improvement, no matter how α was tuned. At first glance, the idea of exploiting residuals felt quite appealing. But after stepping back, I realized that this approach was largely redundant. For boosting-based models such as XGBoost, residual-like corrections are already learned stage by stage during training. In that sense, I was trying to manually add something the model had already done internally.

Still, I found the process valuable. Writing the code, testing the idea, and observing the results helped me develop a deeper understanding of how these models work. Rather than simply accepting a technique because it sounds reasonable, I learned to validate it through experimentation.

I also experimented with frequency encoding and seed averaging afterward. The improvements were marginal given the performance of my existing models.

This, again, was a useful reminder that added complexity does not always translate into better results—especially when the baseline is already strong.
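For readers who have not met frequency encoding, here is a small sketch with pandas. The column name `contract` and the toy values are made up for illustration. Each category is replaced by how often it appears in the training data, and the frequencies are learned from the training rows only.

```python
import pandas as pd

# Toy data; the column name and categories are hypothetical.
train = pd.DataFrame(
    {"contract": ["monthly", "monthly", "yearly", "monthly", "two_year"]}
)
test = pd.DataFrame({"contract": ["yearly", "monthly", "prepaid"]})

# Share of training rows that fall into each category.
freq = train["contract"].value_counts(normalize=True)

# Map every category to its training frequency.
train["contract_freq"] = train["contract"].map(freq)

# Categories never seen in training have no frequency, so map() yields NaN;
# filling with 0.0 is one simple choice among several.
test["contract_freq"] = test["contract"].map(freq).fillna(0.0)

print(train)
print(test)
```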

Balancing Learning and Direction

Another topic that draws me into deeper thought is how I should best direct my efforts toward my career goal. My current goal is to start a career in data analysis, where the focus is on practical model application and interpretation.

However, I often find myself drawn to more advanced techniques. I have started to notice a tension between data analysis and machine learning engineering, and between model interpretability and leaderboard-driven optimization. There are many appealing ideas I would like to experiment with, even though they may not be necessary for my future work.

For example, inspired by a leaderboard-winning solution, I was eager to design a pipeline that combines multiple models built on different feature engineering strategies, generates out-of-fold (OOF) predictions, and ensembles them using a Ridge meta-model. Such an approach could potentially improve my leaderboard score. However, at some point, I realized that designing this kind of pipeline is no longer just about building a model—it becomes the design of an entire system. I also began to recognize that an overly complex modeling pipeline can be difficult to apply in real business settings, where interpretability and transparency are often essential.
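To make the idea concrete, here is a heavily simplified sketch of OOF stacking with a Ridge meta-model. It is not the leaderboard solution: the data is synthetic, the three scikit-learn base models are arbitrary stand-ins, and all of them share the same features, whereas the approach described above would give each model its own feature engineering.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression, Ridge
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import (
    StratifiedKFold,
    cross_val_predict,
    train_test_split,
)

# Synthetic stand-in for the competition data (an assumption).
X, y = make_classification(n_samples=600, n_features=10, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Arbitrary base models chosen for illustration.
base_models = {
    "logreg": LogisticRegression(max_iter=1000),
    "forest": RandomForestClassifier(n_estimators=50, random_state=0),
    "boosting": GradientBoostingClassifier(n_estimators=50, random_state=0),
}

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Out-of-fold predictions: every training row is predicted by a model
# that did not see it, so the meta-model learns from honest predictions.
oof = np.column_stack([
    cross_val_predict(m, X_train, y_train, cv=cv, method="predict_proba")[:, 1]
    for m in base_models.values()
])

# Test-set predictions come from each base model refit on all training rows.
test_preds = np.column_stack([
    m.fit(X_train, y_train).predict_proba(X_test)[:, 1]
    for m in base_models.values()
])

# Ridge meta-model: learns how much weight each base model deserves.
meta = Ridge(alpha=1.0).fit(oof, y_train)

# Ridge outputs an unbounded score rather than a probability; that is
# sufficient for ROC AUC, which depends only on the ranking.
stacked = meta.predict(test_preds)

for name, col in zip(base_models, test_preds.T):
    print(f"{name}: ROC AUC = {roc_auc_score(y_test, col):.4f}")
print(f"stacked: ROC AUC = {roc_auc_score(y_test, stacked):.4f}")
print("meta weights:", dict(zip(base_models, np.round(meta.coef_, 3))))
```

Even in this small form, the pipeline has several moving parts (fold definitions, per-model fitting, a second modeling layer), which hints at why a full version starts to feel like system design.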

A similar tension appears in feature engineering. More complex transformations can sometimes improve model performance, but they are often difficult to interpret or explain in a business context. Features that are heavily engineered or abstract may lose their intuitive meaning, making it harder to connect model outputs back to real-world behavior or actionable insights.

After thinking more carefully, I felt that an improvement of 0.001 in ROC AUC is unlikely to justify the loss of clarity that comes with complex model stacking or overly engineered features.

For now, I will continue to focus on a data analysis–oriented approach, while exploring more complex pipelines like multi-model ensembling simply out of curiosity and for learning.

Looking Ahead

I will keep asking myself the following questions along the way:

- Am I becoming fluent in writing code?

- Do I truly understand the fundamentals of statistics and business through applying these models?

- Can I explain what I am doing clearly?

Looking ahead, I plan to:

- Continue working on a housing price prediction project on Kaggle. It is a regression problem (predicting a continuous value), which is different from the classification problems I have worked on so far. It will involve different models, and with around 40 features, there will be more room to explore feature engineering. I expect this project to be especially exciting.

- Keep practicing pandas and SQL through LeetCode.

- Complete an A/B testing course on Udacity.

- Regularly summarize and review what I have learned.

I believe an idea is not fully understood until it can be explained clearly and precisely.

There is still a long way to go, but I feel that I am on the right path.